The rise of AI has fundamentally shifted how software systems are conceived, designed, and operated. “Engineering AI Systems: Architecture and DevOps Essentials” by Len Bass, Qinghua Lu, Ingo Weber, and Liming Zhu provides a roadmap for building reliable, secure, and scalable AI systems. This article unpacks the book’s insights, exploring the confluence of AI, software engineering, and societal challenges in deploying responsible AI.

Section 1: Context – The Evolution of AI Engineering

In the early days of software engineering, systems were deterministic, predictable, and largely rule-based. Developers wrote explicit instructions, leaving little room for ambiguity. Fast forward to today, and the landscape has drastically shifted. Artificial Intelligence (AI) systems no longer rely solely on hardcoded rules; they infer, adapt, and learn from data. This transition has brought immense potential but also unprecedented complexity.

The book places this evolution in context, tracing the journey from rule-based symbolic AI to machine learning (ML) and, more recently, to foundation models (FMs). These FMs, like OpenAI’s GPT-4 or Meta’s LLaMA, are trained on vast datasets, capable of generating text, analyzing images, and even making predictions. However, integrating such models into software systems isn’t straightforward. It requires a new discipline: AI engineering.

AI engineering is not merely about building models; it’s about embedding them into robust, scalable, and ethical systems. As Len Bass and his co-authors argue, this requires a fusion of modern software engineering practices, DevOps methodologies, and AI-specific considerations like data quality, model lifecycle management, and fairness.

Section 2: Technical Breakdown – How AI Systems Work

At its core, building an AI system is akin to constructing a skyscraper. The foundation must be strong, the architecture sound, and the operations seamless. The book identifies three pillars of AI engineering: software architecture, DevOps, and high-quality AI models.

1. The Architecture of Intelligence

Software architecture is the blueprint for any system, and AI systems are no exception. Unlike traditional systems, AI systems have two distinct portions: the AI model and the non-AI components. The model handles tasks like predictions or content generation, while the non-AI components manage data pipelines, user interfaces, and system integrations.

The authors emphasize the importance of “architectural tactics” to achieve system qualities like reliability, performance, and security. For instance, redundancy can enhance reliability, while caching improves efficiency. However, in AI systems, these tactics must also account for the probabilistic nature of models. For example, a chatbot may need fallback mechanisms when the model generates uncertain or incorrect responses.

2. DevOps Meets MLOps

DevOps revolutionized software development by automating testing, deployment, and monitoring. In AI systems, this paradigm extends to MLOps (Machine Learning Operations), which focuses on the data and model lifecycle. Key practices include:

- Data versioning: Ensuring reproducibility by tracking changes in datasets.

- Experiment tracking: Logging model training experiments to identify the best configurations.

- Continuous monitoring: Detecting data drift or model degradation in real-time.

3. Foundation Models and Their Ecosystem

The book dedicates significant attention to foundation models (FMs), highlighting their transformative potential and challenges. These models, based on transformer architectures, rely on attention mechanisms to process data contextually. While their capabilities are vast, they are resource-intensive and require customization techniques like fine-tuning or retrieval-augmented generation (RAG).

For instance, RAG enhances FMs by integrating external knowledge bases. Imagine a customer service chatbot that retrieves company-specific FAQs to answer queries accurately—a practical application of RAG.

Section 3: Case Studies – AI in Action

The book brings its theories to life through real-world examples. Here are three standout case studies:

1. Automating Tender Responses with AI

Fraunhofer, a leading research organization, tackled the tedious process of matching product requirements in tenders to a company’s catalog. By deploying a BERT-based language model, they automated the recommendation of products, significantly reducing manual effort. The system was complemented by rules and heuristics to ensure accuracy and consistency.

2. Chatbots for Australian SMEs

The Advanced Robotics for Manufacturing (ARM) Hub in Australia developed a knowledge-based chatbot for small and medium enterprises (SMEs). Leveraging RAG, the chatbot integrates company-specific documents into its responses, addressing the unique challenges of SMEs without large IT teams. The result is a scalable, cost-effective solution tailored to manufacturing needs.

3. Predicting Customer Churn in Banking

In the banking sector, customer churn is a critical metric. The book details how a bank used machine learning models to predict churn, enabling proactive interventions. The system combined traditional data analytics with AI, offering a glimpse into hybrid architectures.

Section 4: Ethical Debate – Challenges and Trade-offs

AI systems are not just technical constructs; they are socio-technical systems with profound ethical implications. The book delves into two critical areas: privacy and fairness.

Privacy

AI systems often process sensitive data, raising questions about compliance with regulations like GDPR. The authors advocate for techniques like differential privacy, which adds noise to data to protect individual identities while preserving utility. However, implementing such measures requires trade-offs in accuracy and performance.

Fairness

Bias in AI is a well-documented issue. For example, recruitment algorithms trained on historical data may perpetuate gender or racial biases. The book suggests metrics like equalized odds to evaluate fairness but acknowledges the complexity of balancing fairness with other objectives like efficiency.

The Role of Guardrails

To mitigate risks, the authors propose “guardrails”—mechanisms to monitor and control AI systems. These include:

- Input guardrails to filter harmful queries.

- Output guardrails to prevent biased or inappropriate responses.

- Continuous monitoring to detect and address issues in real-time.

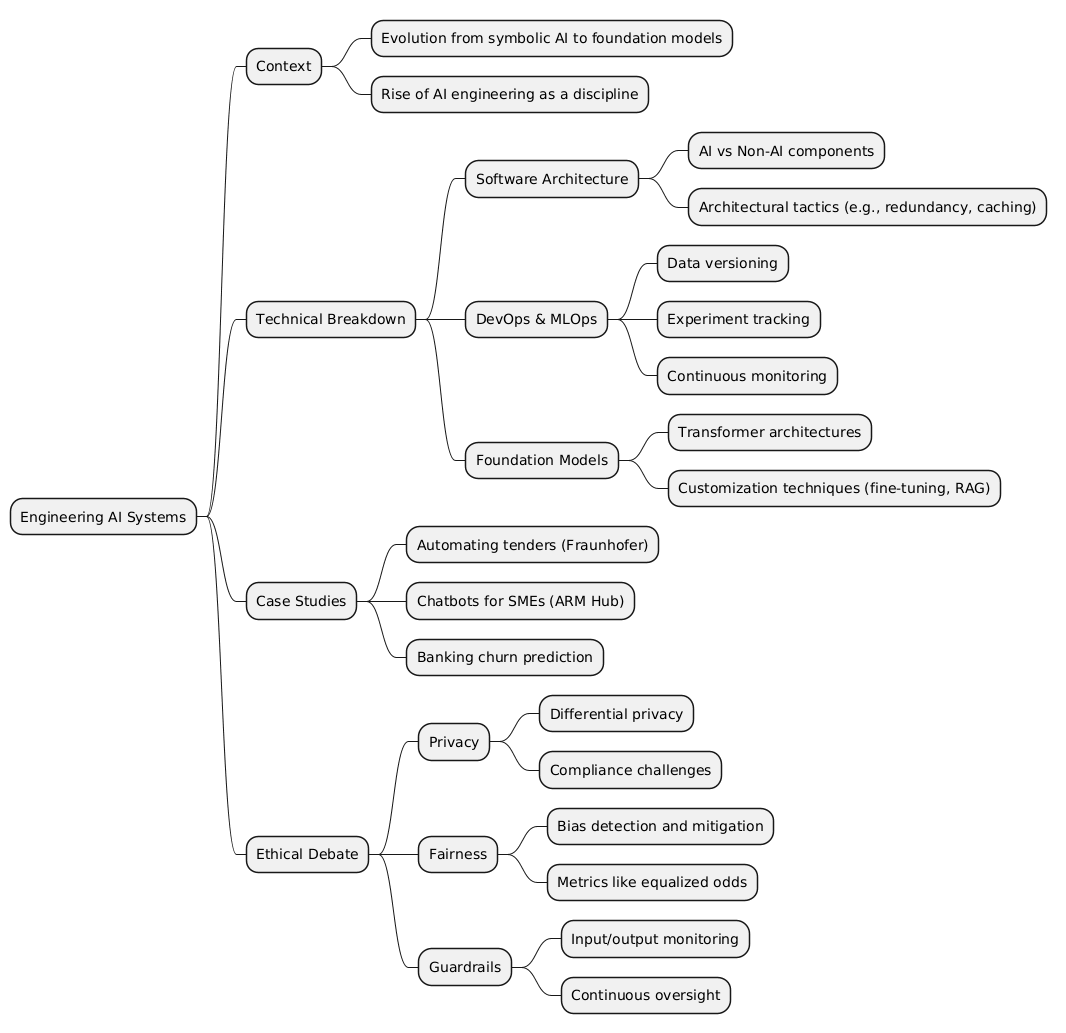

Mind Map

Key Takeaways

💡 AI engineering bridges software and intelligence, requiring a fusion of traditional software practices with AI-specific techniques.

⚠️ Foundation models are powerful but resource-intensive, necessitating careful customization and ethical oversight.

🔍 Case studies illustrate practical applications, from chatbots to predictive analytics, showcasing AI’s transformative potential.

💡 Ethical considerations like privacy and fairness are paramount, demanding robust frameworks and guardrails.

⚠️ MLOps is essential for scalable, reliable AI systems, ensuring continuous monitoring and adaptability.