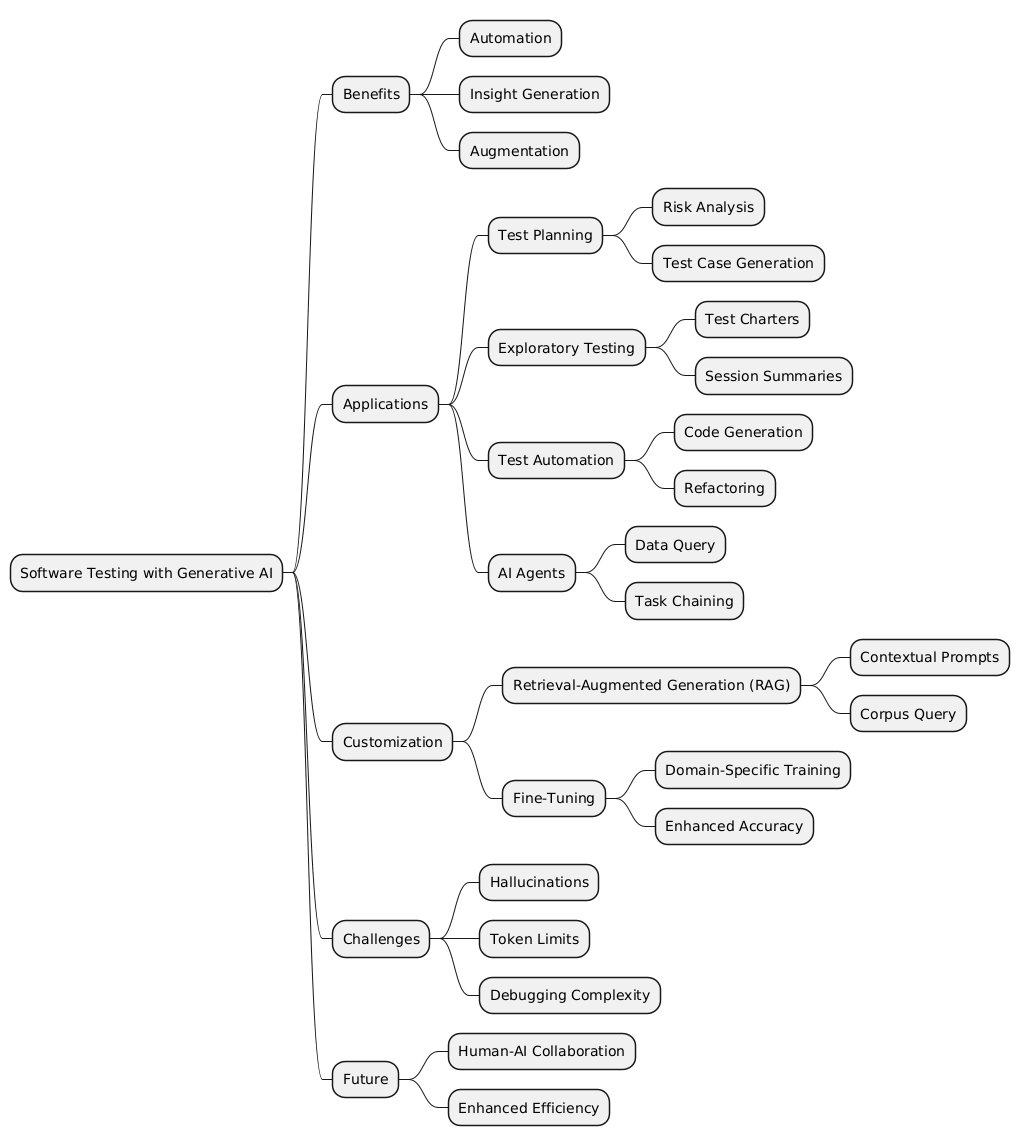

Generative AI is changing software testing by automating tasks, generating insights, and augmenting human capabilities. This article explores how tools built on large language models (LLMs), such as ChatGPT and GitHub Copilot, are reshaping the field, with practical examples and strategies for integrating AI into testing workflows. It covers test planning, exploratory testing, test automation, AI agents, and LLM customization, and closes with the main limitations to watch for.

1. Introduction to Generative AI in Software Testing

Generative AI, powered by large language models (LLMs), is redefining how software testing is approached. These AI systems, trained on vast datasets, can generate natural language responses, code snippets, and even creative solutions to complex problems. By leveraging tools like ChatGPT and GitHub Copilot, testers can streamline workflows, improve accuracy, and focus on higher-level tasks.

Key Benefits

- Automation: Reduces repetitive tasks like test data generation.

- Insight Generation: Suggests risks, test cases, and strategies.

- Augmentation: Enhances human decision-making with AI-driven insights.

2. Test Planning with AI Support

The Challenge

Traditional test planning often involves significant manual effort, requiring testers to identify risks, design test cases, and ensure comprehensive coverage. Generative AI simplifies this by analyzing user stories, requirements, and historical data to suggest test ideas.

Example

A user story for a file upload feature:

- AI-generated risks include unsupported file formats (only PDF and DOCX are valid), files that exceed the 20 MB size limit, and security vulnerabilities such as malicious files.
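The risks above translate fairly directly into boundary checks. The following is a minimal sketch, not a real upload handler: `validate_upload` and its error strings are hypothetical, and only the allowed formats and the 20 MB limit come from the example.

```python
from pathlib import Path

# Taken from the example above; everything else here is an assumption.
ALLOWED_EXTENSIONS = {".pdf", ".docx"}
MAX_SIZE_BYTES = 20 * 1024 * 1024  # 20 MB


def validate_upload(filename: str, size_bytes: int) -> list[str]:
    """Return a list of validation errors (empty when the upload is acceptable)."""
    errors = []
    if Path(filename).suffix.lower() not in ALLOWED_EXTENSIONS:
        errors.append("unsupported format")
    if size_bytes > MAX_SIZE_BYTES:
        errors.append("file too large")
    if size_bytes <= 0:
        errors.append("empty file")
    return errors


# Test cases derived from the suggested risks: (filename, size, expected errors)
CASES = [
    ("report.pdf", 1024, []),
    ("notes.DOCX", MAX_SIZE_BYTES, []),  # boundary: exactly at the limit
    ("notes.docx", MAX_SIZE_BYTES + 1, ["file too large"]),
    ("invoice.pdf.exe", 2048, ["unsupported format"]),  # disguised executable
]


def run_cases() -> list[bool]:
    """Check every case and report which ones behave as expected."""
    return [validate_upload(name, size) == expected for name, size, expected in CASES]
```

An extension check alone does not cover the malicious-file risk; a real suite would presumably also inspect file content, which is out of scope for this sketch.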

How It Works

- Input: Provide the user story or requirement.

- Processing: AI analyzes the text and generates risks and test cases.

- Output: A detailed list of test scenarios tailored to the context.
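The input, processing, and output steps can be sketched as a small pipeline. This is an illustrative outline under stated assumptions: the prompt wording is invented, `ask_llm` is a placeholder for whatever model client a team uses, and `fake_llm` is a stand-in so the example runs without any external service.

```python
from typing import Callable

# Hypothetical prompt wording; adjust to the model and context in use.
PROMPT_TEMPLATE = (
    "You are a software tester. Read the user story delimited by triple hashes "
    "and list risks to test, one per line, each starting with '- '.\n"
    "###\n{story}\n###"
)


def build_prompt(user_story: str) -> str:
    """Input step: wrap the user story in an instruction."""
    return PROMPT_TEMPLATE.format(story=user_story.strip())


def parse_scenarios(response: str) -> list[str]:
    """Output step: keep only the bulleted lines of the model's reply."""
    scenarios = []
    for line in response.splitlines():
        stripped = line.strip()
        if stripped.startswith("- "):
            scenarios.append(stripped[2:].strip())
    return scenarios


def suggest_scenarios(user_story: str, ask_llm: Callable[[str], str]) -> list[str]:
    """Processing step: send the prompt to the model and parse the reply."""
    return parse_scenarios(ask_llm(build_prompt(user_story)))


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call, returning a canned reply."""
    return "Here are some risks:\n- Upload a file over 20MB\n- Upload an unsupported format\n"


scenarios = suggest_scenarios("As a user, I want to upload a file.", fake_llm)
```

Passing the model call in as a function keeps the parsing logic testable on its own, which matters because model replies do not always follow the requested format.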

3. Exploratory Testing with AI

Exploratory testing focuses on uncovering unexpected issues by interacting with the software in creative ways. Generative AI can assist by:

- Generating test charters.

- Suggesting edge cases.

- Summarizing session notes into actionable insights.

Example

During a testing session for a booking system:

- AI suggests testing responsiveness across devices and edge cases for overlapping reservations.

- The session uncovers bugs in calendar navigation as well as performance issues.
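The overlapping-reservation edge cases are easy to get wrong, so it can help to write them down explicitly. The sketch below assumes a half-open interval convention (a guest can check in on the day another checks out); the booking system in the example may use a different rule, and `overlaps` is a hypothetical helper.

```python
from datetime import date


def overlaps(start_a: date, end_a: date, start_b: date, end_b: date) -> bool:
    """True when two stays share at least one night.

    Stays are treated as half-open intervals [check-in, check-out).
    """
    return start_a < end_b and start_b < end_a


# Edge cases worth exploring: (description, stay A, stay B, expected result)
EDGE_CASES = [
    ("identical stays", (date(2025, 1, 10), date(2025, 1, 12)), (date(2025, 1, 10), date(2025, 1, 12)), True),
    ("partial overlap", (date(2025, 1, 10), date(2025, 1, 12)), (date(2025, 1, 11), date(2025, 1, 14)), True),
    ("one stay inside another", (date(2025, 1, 10), date(2025, 1, 20)), (date(2025, 1, 12), date(2025, 1, 13)), True),
    ("back-to-back stays", (date(2025, 1, 10), date(2025, 1, 12)), (date(2025, 1, 12), date(2025, 1, 14)), False),
]

outcomes = {name: overlaps(*a, *b) == expected for name, a, b, expected in EDGE_CASES}
```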

4. AI-Assisted Test Automation

GitHub Copilot in Action

GitHub Copilot, an AI-powered coding assistant, accelerates test automation by:

- Generating boilerplate code for test frameworks.

- Suggesting helper functions for UI automation.

- Improving documentation with inline comments.

Example

Using Copilot for a timesheet manager:

- Unit Test Creation: AI generates test cases for time tracking.

- Code Suggestions: AI provides production code snippets based on test cases.

- Refactoring: AI identifies redundant code and suggests improvements.
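To make the workflow concrete, here is the kind of test-first pair a coding assistant might help produce. It is a sketch only: the `Timesheet` class, its method names, and the 24-hour rule are assumptions made for illustration, not output from Copilot or part of any real timesheet manager.

```python
import unittest


class Timesheet:
    """Hypothetical production class; names and rules are assumptions."""

    def __init__(self) -> None:
        self._hours_by_day: dict[str, float] = {}

    def add_entry(self, day: str, hours: float) -> None:
        """Record hours worked on a day, accumulating repeated entries."""
        if hours <= 0:
            raise ValueError("hours must be positive")
        total = self._hours_by_day.get(day, 0.0) + hours
        if total > 24:
            raise ValueError("a day cannot exceed 24 hours")
        self._hours_by_day[day] = total

    def total_hours(self) -> float:
        """Sum of all recorded hours across days."""
        return sum(self._hours_by_day.values())


class TimesheetTest(unittest.TestCase):
    def test_entries_on_same_day_accumulate(self):
        sheet = Timesheet()
        sheet.add_entry("2025-01-06", 7.5)
        sheet.add_entry("2025-01-06", 0.5)
        self.assertEqual(sheet.total_hours(), 8.0)

    def test_rejects_non_positive_hours(self):
        with self.assertRaises(ValueError):
            Timesheet().add_entry("2025-01-06", 0)

    def test_rejects_more_than_24_hours_in_a_day(self):
        sheet = Timesheet()
        sheet.add_entry("2025-01-06", 20)
        with self.assertRaises(ValueError):
            sheet.add_entry("2025-01-06", 5)
```

Saved as a module, the tests can be run with `python -m unittest`. Generated tests like these still need review, since an assistant can easily encode the wrong business rule.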

5. AI Agents as Testing Assistants

AI agents, powered by LLMs, can autonomously perform testing tasks:

- Query databases for relevant test data.

- Chain tasks together, such as creating test data and analyzing results.

- Adapt to new requirements by incorporating feedback from earlier interactions into later steps.

Example

An AI agent for a hotel booking system:

- Creates room and booking data.

- Analyzes database consistency.

- Suggests improvements based on anomalies detected.
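One way to picture such an agent is as a set of small tools that a model chooses between and chains together. The sketch below leaves the model out entirely and runs a hand-written plan, so it shows only the tool side. The schema, tool names, and plan format are all assumptions for illustration, using an in-memory SQLite database.

```python
import sqlite3


def create_schema(conn: sqlite3.Connection) -> None:
    """Set up a hypothetical two-table hotel schema."""
    conn.execute("CREATE TABLE rooms (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute(
        "CREATE TABLE bookings (id INTEGER PRIMARY KEY, room_id INTEGER, checkin TEXT, checkout TEXT)"
    )


def create_room(conn: sqlite3.Connection, name: str) -> int:
    return conn.execute("INSERT INTO rooms (name) VALUES (?)", (name,)).lastrowid


def create_booking(conn: sqlite3.Connection, room_id: int, checkin: str, checkout: str) -> int:
    return conn.execute(
        "INSERT INTO bookings (room_id, checkin, checkout) VALUES (?, ?, ?)",
        (room_id, checkin, checkout),
    ).lastrowid


def find_anomalies(conn: sqlite3.Connection) -> dict[str, list[int]]:
    """Report bookings that reference missing rooms or have inverted dates."""
    orphaned = conn.execute(
        "SELECT b.id FROM bookings b LEFT JOIN rooms r ON r.id = b.room_id "
        "WHERE r.id IS NULL ORDER BY b.id"
    ).fetchall()
    # ISO-8601 date strings compare correctly as plain text.
    inverted = conn.execute(
        "SELECT id FROM bookings WHERE checkout <= checkin ORDER BY id"
    ).fetchall()
    return {
        "orphaned_bookings": [row[0] for row in orphaned],
        "invalid_dates": [row[0] for row in inverted],
    }


TOOLS = {"create_room": create_room, "create_booking": create_booking, "find_anomalies": find_anomalies}


def run_plan(conn: sqlite3.Connection, plan: list[tuple[str, dict]]) -> list:
    """Execute (tool_name, kwargs) steps in order, as an agent would after picking tools."""
    return [TOOLS[name](conn, **kwargs) for name, kwargs in plan]


conn = sqlite3.connect(":memory:")
create_schema(conn)
plan = [
    ("create_room", {"name": "Deluxe"}),
    ("create_booking", {"room_id": 1, "checkin": "2025-01-10", "checkout": "2025-01-12"}),
    ("create_booking", {"room_id": 99, "checkin": "2025-02-01", "checkout": "2025-02-03"}),  # no such room
    ("create_booking", {"room_id": 1, "checkin": "2025-03-05", "checkout": "2025-03-01"}),  # inverted dates
    ("find_anomalies", {}),
]
results = run_plan(conn, plan)
```

In a real agent the plan would come from the model rather than a hard-coded list, which is why each tool validates nothing on its own and the consistency check runs as a separate step.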

6. Customizing LLMs for Context

Retrieval-Augmented Generation (RAG)

RAG enhances LLMs by adding relevant context to prompts:

- Queries a corpus of documents for relevant data.

- Combines user input with contextual data to improve accuracy.

Example

Generating test cases for a branding endpoint:

- RAG retrieves user stories and API documentation.

- AI generates risks tailored to the endpoint’s JSON schema.
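The retrieve-then-combine flow can be shown with a toy retriever. This sketch scores documents by simple word overlap, which is a deliberate simplification; production RAG setups typically use embedding-based similarity search instead. The corpus contents, document names, and prompt layout are invented for illustration.

```python
import re

# Hypothetical corpus standing in for user stories and API documentation.
CORPUS = {
    "branding_story": "As an admin I want to update the branding details so the site shows our hotel name and logo.",
    "branding_api": "PUT /branding accepts JSON with name, logoUrl and contact fields.",
    "booking_api": "POST /booking creates a reservation for a room.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: dict[str, str], k: int = 2) -> list[str]:
    """Return the names of the k documents sharing the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        corpus.items(),
        key=lambda item: len(query_words & tokenize(item[1])),
        reverse=True,
    )
    return [name for name, text in ranked[:k] if query_words & tokenize(text)]


def build_rag_prompt(query: str, corpus: dict[str, str], k: int = 2) -> str:
    """Combine the retrieved documents with the user's request."""
    context = "\n\n".join(f"[{name}]\n{corpus[name]}" for name in retrieve(query, corpus, k))
    return f"Context:\n{context}\n\nTask: {query}"


query = "Suggest risks for the branding endpoint JSON payload"
prompt = build_rag_prompt(query, CORPUS)
```

The resulting prompt contains only the two branding documents, so the model sees the relevant story and API description without the unrelated booking endpoint.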

Fine-Tuning

Fine-tuning involves further training a pretrained LLM on a specific dataset to align it with domain-specific needs:

- Embeds organizational terminology and workflows.

- Improves accuracy for repetitive tasks.

Example

Fine-tuning an LLM for e-commerce testing:

- Trained on historical bug reports and user feedback.

- AI suggests targeted test cases for checkout flows and payment integrations.
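Much of the practical work in fine-tuning is preparing the training data. The sketch below converts bug reports into prompt and completion pairs in JSON Lines form. The field names (`feature`, `test_idea`) and the record layout are assumptions; each fine-tuning service defines its own required format, so check the provider's documentation before reusing this shape.

```python
import json

# Hypothetical historical bug reports; field names are illustrative.
BUG_REPORTS = [
    {"feature": "checkout with expired card", "test_idea": "Verify a clear error is shown and no order is created."},
    {"feature": "coupon applied twice", "test_idea": "Verify the discount is applied only once per order."},
    {"feature": "payment timeout", "test_idea": ""},  # incomplete, will be skipped
]


def to_training_records(bug_reports: list[dict]) -> list[dict]:
    """Turn complete bug reports into prompt/completion pairs."""
    records = []
    for report in bug_reports:
        if not report.get("feature") or not report.get("test_idea"):
            continue  # skip incomplete reports rather than train on gaps
        records.append(
            {
                "prompt": f"Suggest a test for: {report['feature']}",
                "completion": report["test_idea"],
            }
        )
    return records


def to_jsonl(records: list[dict]) -> str:
    """Serialize records as one JSON object per line."""
    return "\n".join(json.dumps(record) for record in records)


jsonl = to_jsonl(to_training_records(BUG_REPORTS))
```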

7. Challenges and Considerations

Limitations

- Hallucinations: AI may generate plausible but incorrect responses.

- Token Limits: A model's context window caps how much text a single prompt and its response can contain.

- Debugging Complexity: It is hard to trace why a model produced a given output, so AI-generated outputs require careful validation.
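A common workaround for token limits is to split long inputs into pieces that each fit the context window. The sketch below counts whitespace-separated words as a rough proxy for tokens, which is an assumption; real tokenizers split text differently, so an actual limit should be measured with the model's own tokenizer. `chunk_words` is a hypothetical helper.

```python
def chunk_words(text: str, max_words: int) -> list[str]:
    """Split text into consecutive chunks of at most max_words words each."""
    if max_words <= 0:
        raise ValueError("max_words must be positive")
    words = text.split()
    return [
        " ".join(words[start:start + max_words])
        for start in range(0, len(words), max_words)
    ]


# Example: a long requirements document would be processed chunk by chunk.
chunks = chunk_words("upload accepts pdf and docx files up to twenty megabytes", 4)
```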

Mitigation Strategies

- Combine RAG and fine-tuning for enhanced accuracy.

- Use human oversight to validate AI outputs.

- Continuously update datasets to reflect evolving requirements.
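Human oversight is easier when obviously malformed output is filtered out first. The following sketch checks that model-generated test cases are valid JSON with the expected fields before a person reviews them. The required fields (`title`, `steps`, `expected`) are an assumed schema chosen for illustration, and a structural check like this says nothing about whether the content is correct.

```python
import json

# Assumed schema for a generated test case: field name -> expected type.
REQUIRED_FIELDS = {"title": str, "steps": list, "expected": str}


def validate_test_cases(raw: str) -> tuple[list[dict], list[str]]:
    """Split model output into well-formed test cases and a list of problems."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [], [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, list):
        return [], ["top-level value must be a list"]

    accepted, problems = [], []
    for index, case in enumerate(data):
        invalid = [
            name
            for name, kind in REQUIRED_FIELDS.items()
            if not isinstance(case, dict) or not isinstance(case.get(name), kind)
        ]
        if invalid:
            problems.append(f"case {index}: missing or invalid {', '.join(invalid)}")
        else:
            accepted.append(case)
    return accepted, problems


raw_output = json.dumps(
    [
        {"title": "Upload oversized file", "steps": ["Select a 21 MB PDF", "Submit"], "expected": "Upload is rejected"},
        {"title": "Upload with no steps"},
    ]
)
accepted, problems = validate_test_cases(raw_output)
```

Only the accepted cases would go forward to a reviewer, while the problem list can be fed back into a follow-up prompt or logged.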

8. The Future of AI in Testing

Generative AI is not a replacement for human testers but a powerful augmentation tool. By automating mundane tasks, suggesting test ideas, and accelerating workflows, AI frees testers to focus on the strategic aspects of quality assurance. As AI tools evolve, they are likely to integrate more smoothly into testing pipelines.

Conclusion

Generative AI is transforming software testing by automating repetitive tasks, enhancing exploratory testing, and enabling smarter test planning. As testers embrace tools like ChatGPT and GitHub Copilot, they unlock new possibilities for efficiency and innovation, paving the way for a future where human ingenuity and AI capabilities work hand in hand.