- Generative AI is reshaping time series forecasting, offering precision and adaptability across industries.

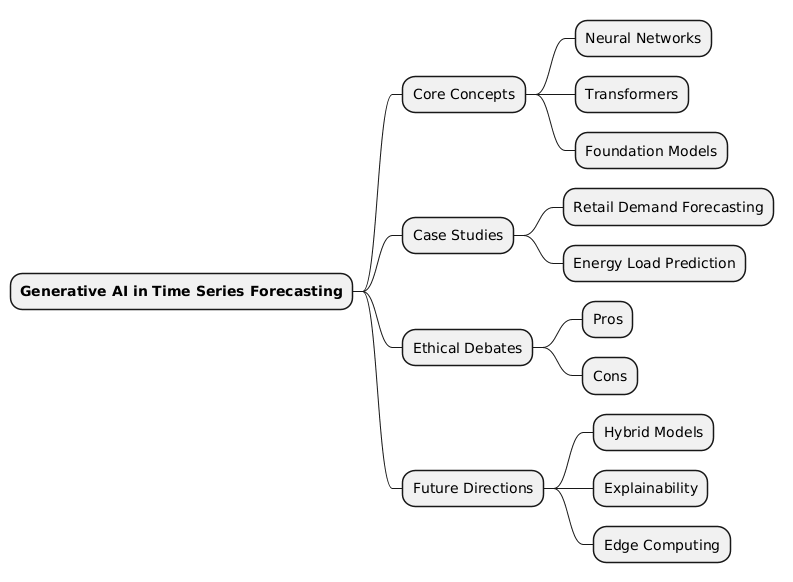

- Covering neural networks, transformers, and foundation models, the book Time Series Forecasting Using Generative AI explores cutting-edge techniques for making predictions.

- This article unpacks the book’s technical insights, real-world applications, ethical considerations, and the future of AI-driven forecasting.

Introduction & Context

In the world of artificial intelligence, few challenges are as pervasive and impactful as time series forecasting. From predicting stock market trends to anticipating energy demands, time series data is the backbone of decision-making across industries. Historically, techniques like ARIMA and exponential smoothing dominated this space. However, the advent of machine learning, and more recently, generative AI, has revolutionized how we approach these problems.

The book Time Series Forecasting Using Generative AI by Banglore Vijay Kumar Vishwas and Sri Ram Macharla serves as a guide to this transformation. It chronicles the evolution from traditional statistical methods to state-of-the-art neural networks and foundation models like TimeGPT. The authors contextualize this shift within broader technological advancements, such as the rise of transformers in natural language processing (NLP) and their adaptation for sequential data.

Today, generative AI is not just a tool but a paradigm shift. It can help organizations forecast more accurately than earlier methods, even when data is sparse or noisy. This article delves into the book’s technical depth, real-world applications, and ethical implications, offering a comprehensive view of how generative AI is shaping the future of forecasting.

Technical Breakdown

At the heart of the book lies a deep dive into the technical machinery powering modern time series forecasting. The authors meticulously explain concepts ranging from neural networks to advanced transformer architectures. Let’s unpack these layers.

Neural Networks: The Foundation

Neural networks such as RNNs (Recurrent Neural Networks) and LSTMs (Long Short-Term Memory networks) were early game-changers in time series forecasting. Unlike traditional linear methods such as ARIMA, these models learn nonlinear relationships and temporal dependencies directly from data. In brief:

- RNNs process sequential data one step at a time, passing a hidden state from each step to the next; this state acts as a “memory” of past data points.

- LSTMs address RNNs’ limitations, such as vanishing gradients, by adding a cell state and gates (input, forget, and output) that control what information is kept, updated, or passed on.
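To make the recurrence concrete, here is a minimal pure-Python sketch with a scalar hidden state. The weights are arbitrary illustrative values (not trained, and not from the book); real models use vector states and learned weight matrices. `rnn_forward` carries the hidden state forward, and `lstm_step` shows how the forget and input gates decide how much of the old cell state survives.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def rnn_forward(xs, w_x=0.5, w_h=0.8, b=0.0):
    """Elman-style RNN with a scalar hidden state (illustrative weights).

    Each new state depends on the current input and the previous state,
    which is how the network keeps a "memory" of earlier points.
    """
    h = 0.0
    states = []
    for x in xs:
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states


def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell.

    p maps each gate name to a (w_x, w_h, bias) tuple; values are hypothetical.
    """
    def pre(gate):
        w_x, w_h, bias = p[gate]
        return w_x * x + w_h * h_prev + bias

    f = sigmoid(pre("forget"))   # how much of the old cell state to keep
    i = sigmoid(pre("input"))    # how much of the new candidate to write
    o = sigmoid(pre("output"))   # how much of the cell state to expose
    g = math.tanh(pre("candidate"))
    c = f * c_prev + i * g       # additive update eases gradient flow
    h = o * math.tanh(c)
    return h, c


# A single nonzero input leaves a decaying trace in the RNN state.
print(rnn_forward([1.0, 0.0, 0.0]))

# With the forget gate near 1 and the input gate near 0, the LSTM cell
# state is carried across steps almost unchanged.
keep = {"forget": (0.0, 0.0, 10.0), "input": (0.0, 0.0, -10.0),
        "output": (0.0, 0.0, 0.0), "candidate": (1.0, 1.0, 0.0)}
h, c = 0.0, 1.0
for x in [5.0, -3.0, 2.0]:
    h, c = lstm_step(x, h, c, keep)
print(round(c, 3))
```

The gate settings in `keep` are chosen by hand to show the mechanism; a trained LSTM learns when to open and close each gate from data.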

The book illustrates these concepts with practical examples, such as predicting monthly air passenger traffic, a classic dataset in time series analysis.
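Before a recurrent model can learn from a series like monthly passenger counts, the series is usually recast as supervised (input window, target) pairs. A minimal sketch; the function name `make_windows` and the sample values are illustrative, not taken from the book.

```python
def make_windows(series, lookback, horizon=1):
    """Slice a 1-D series into (input window, target) pairs.

    Each input holds `lookback` consecutive values; each target holds the
    `horizon` values that immediately follow it.
    """
    if lookback < 1 or horizon < 1:
        raise ValueError("lookback and horizon must be positive")
    inputs, targets = [], []
    for start in range(len(series) - lookback - horizon + 1):
        end = start + lookback
        inputs.append(series[start:end])
        targets.append(series[end:end + horizon])
    return inputs, targets


# Illustrative monthly counts
series = [112, 118, 132, 129, 121, 135, 148, 148]
X, y = make_windows(series, lookback=3)
for window, target in zip(X, y):
    print(window, "->", target)
```

In practice the values are typically scaled before training, and evaluation windows are taken strictly after the training windows so the model never sees the future it is asked to predict.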

Transformers: A Paradigm Shift

Transformers, originally designed for NLP, have redefined sequence modeling. Their self-attention mechanism allows models to focus on relevant parts of the input, making them ideal for capturing long-range dependencies in time series data. The book explores several transformer variants:

- Vanilla Transformers: The foundational architecture, adapted for time series by modifying input embeddings and attention mechanisms.

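- PatchTST: A model that splits each series into short subsequences (patches) and feeds them to the transformer as tokens, which shortens the input sequence, enabling more efficient processing and improved accuracy.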

- DLinear and NLinear: Lightweight linear models that can match or outperform more complex transformer architectures, particularly on series with clear trends and seasonality.
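The two ideas behind PatchTST-style models, patching and self-attention, can be sketched in a few lines of pure Python. This is a simplification under stated assumptions: each patch is used directly as a token vector, and the learned query/key/value projections, positional encodings, and normalization of a real transformer are omitted. The default `patch_len` and `stride` values are arbitrary.

```python
import math


def make_patches(series, patch_len=4, stride=2):
    """Split a series into (possibly overlapping) fixed-length patches."""
    return [series[s:s + patch_len]
            for s in range(0, len(series) - patch_len + 1, stride)]


def softmax(scores):
    m = max(scores)                          # subtract max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention with Q = K = V = tokens."""
    d = len(tokens[0])
    outputs, weights = [], []
    for q in tokens:
        # Similarity of this token to every token, scaled by sqrt(d)
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        w = softmax(scores)
        weights.append(w)
        # Output is the attention-weighted average of the value vectors
        outputs.append([sum(wj * v[i] for wj, v in zip(w, tokens)) for i in range(d)])
    return outputs, weights


series = [float(t % 4) for t in range(16)]   # a toy series with period 4
patches = make_patches(series)
out, attn = self_attention(patches)
print(len(patches), "tokens instead of", len(series), "time steps")
```

Attention cost grows with the square of the number of tokens, so attending over a handful of patches rather than every individual time step is where patching saves work on long inputs.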
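DLinear and NLinear come from work showing that a single linear layer over the lookback window can be competitive with transformers. As they are usually described, DLinear splits the input into a moving-average trend and a remainder and applies one linear map to each, while NLinear subtracts the last observed value, applies a linear map, and adds that value back. The sketch below shows only the forward pass with hand-set weights (in practice the weights are learned), and the kernel size of 3 is an arbitrary choice for the short example.

```python
def moving_average(x, kernel):
    """Trend estimate: moving average with edge values repeated as padding."""
    front = (kernel - 1) // 2
    padded = [x[0]] * front + list(x) + [x[-1]] * (kernel - 1 - front)
    return [sum(padded[i:i + kernel]) / kernel for i in range(len(x))]


def linear(weights, bias, x):
    """Map a lookback window (length L) to a horizon (length H): W @ x + b."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def dlinear(x, w_trend, b_trend, w_season, b_season, kernel=3):
    trend = moving_average(x, kernel)
    remainder = [v - t for v, t in zip(x, trend)]
    yt = linear(w_trend, b_trend, trend)
    ys = linear(w_season, b_season, remainder)
    return [a + b for a, b in zip(yt, ys)]


def nlinear(x, w, b):
    last = x[-1]
    shifted = [v - last for v in x]          # normalize by the last value
    return [y + last for y in linear(w, b, shifted)]


x = [1.0, 2.0, 3.0, 4.0, 5.0]
# Hand-set weights: each output copies the last input element (H = 2).
copy_last = [[0.0, 0.0, 0.0, 0.0, 1.0]] * 2
print(dlinear(x, copy_last, [0.0, 0.0], copy_last, [0.0, 0.0]))
print(nlinear(x, [[0.0] * 5] * 2, [0.0, 0.0]))   # zero weights -> naive forecast
```

With so few parameters, these models are cheap to train and run, which is part of why they make strong baselines.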

Foundation Models: The Future of Forecasting

Foundation models like TimeGPT and MOIRAI represent the next frontier. Trained on billions of data points across diverse domains, these models offer zero-shot forecasting capabilities. They can generalize to unseen datasets without additional training, making them versatile tools for industries ranging from finance to healthcare.

The book also introduces innovative techniques like patch-based input representation and masked encoding, which enhance the performance of these models. For example, TimeGPT uses local positional encoding to capture temporal patterns, while MOIRAI employs multi-patch sizes to handle datasets with varying frequencies.
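The following sketch illustrates, in simplified form, how patch-based input and masked encoding can fit together in a masked-encoder forecaster: the context is cut into patches whose size depends on the sampling frequency, and the forecast horizon is appended as masked tokens for the encoder to fill in. The frequency-to-patch-size table, the zero left-padding, and all names are hypothetical choices for illustration, not MOIRAI's or TimeGPT's actual configuration.

```python
import math

# Hypothetical mapping from sampling frequency to patch size.
PATCH_SIZE_BY_FREQ = {"hourly": 32, "daily": 16, "weekly": 8, "monthly": 8}


def build_masked_input(context, horizon, freq):
    """Return (tokens, is_masked) for a masked-encoder forecaster.

    Observed patches come first; the horizon is covered by masked
    placeholders (None stands in for a learned mask embedding).
    """
    p = PATCH_SIZE_BY_FREQ[freq]
    pad = (-len(context)) % p                 # left-pad so the length divides by p
    padded = [0.0] * pad + list(context)
    observed = [padded[i:i + p] for i in range(0, len(padded), p)]
    n_masked = math.ceil(horizon / p)         # enough patches to cover the horizon
    tokens = observed + [None] * n_masked
    is_masked = [False] * len(observed) + [True] * n_masked
    return tokens, is_masked


tokens, is_masked = build_masked_input([float(v) for v in range(20)], horizon=10, freq="weekly")
print(len(tokens), is_masked)
```

A real model would also carry a padding mask so the zeros are ignored, and would map each patch to an embedding through a projection layer matched to its patch size.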

Case Studies

The book brings its theories to life with compelling real-world applications. Here are two standout examples:

1. Retail Demand Forecasting

A retail giant faced challenges in predicting product demand during holiday seasons, when erratic consumer behavior caused traditional models to fail. By implementing DeepAR, a probabilistic forecasting model discussed in the book, the company achieved significant improvements. DeepAR trains an autoregressive RNN jointly across many related time series, such as sales data from similar products, which lets it provide robust predictions even for new items with little history.
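DeepAR's probabilistic output can be sketched as follows: at each step the network emits the parameters of a distribution (a Gaussian here), training minimizes the negative log-likelihood of the observed values, and forecasts are produced by sampling a value, feeding it back as the next input, and repeating. In this minimal sketch the trained RNN is replaced by `toy_model`, a hypothetical fixed rule, so only the likelihood and the sampling loop reflect the technique.

```python
import math
import random


def gaussian_nll(y, mu, sigma):
    """Negative log-likelihood of y under Normal(mu, sigma**2)."""
    return 0.5 * math.log(2 * math.pi * sigma ** 2) + (y - mu) ** 2 / (2 * sigma ** 2)


def toy_model(prev_value):
    """Hypothetical stand-in for the trained RNN: returns (mu, sigma)."""
    return 0.9 * prev_value + 1.0, 0.5


def sample_paths(last_value, horizon, n_paths=1000, seed=0):
    """Ancestral sampling: each sampled value becomes the next step's input."""
    rng = random.Random(seed)
    paths = []
    for _ in range(n_paths):
        prev, path = last_value, []
        for _ in range(horizon):
            mu, sigma = toy_model(prev)
            prev = rng.gauss(mu, sigma)
            path.append(prev)
        paths.append(path)
    return paths


def quantiles_per_step(paths, qs=(0.1, 0.5, 0.9)):
    """Empirical quantiles of the sampled values at each forecast step."""
    result = []
    for t in range(len(paths[0])):
        values = sorted(path[t] for path in paths)
        result.append({q: values[int(q * (len(values) - 1))] for q in qs})
    return result


bands = quantiles_per_step(sample_paths(last_value=10.0, horizon=5))
for step, band in enumerate(bands, start=1):
    print(step, {q: round(v, 2) for q, v in band.items()})
```

Because uncertainty compounds through the sampled inputs, the 10% to 90% band widens with the horizon, giving planners a range rather than a single point forecast.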

2. Energy Load Prediction

Energy providers often struggle to balance supply and demand, especially during extreme weather. Using TimeGPT, an energy company was able to forecast electricity consumption with high precision. The model’s ability to incorporate exogenous variables, such as temperature and humidity, alongside historical consumption data proved invaluable. The result: reduced operational costs and enhanced grid stability.

Ethical Debate

As with any transformative technology, generative AI in time series forecasting raises ethical questions. The book addresses these issues with a balanced perspective.

Pros:

- Increased Efficiency: By automating complex forecasting tasks, generative AI reduces human effort and accelerates decision-making.

- Improved Accuracy: Models like MOIRAI and TimeGPT can outperform traditional methods, reducing errors in critical applications such as healthcare and disaster management.

- Scalability: Because foundation models can forecast diverse datasets without per-dataset training, they are accessible to organizations of all sizes.

Cons:

- Data Privacy: The reliance on large-scale data raises concerns about the ethical use of sensitive information.

- Bias and Fairness: Models trained on biased datasets may perpetuate inequalities, such as underestimating demand in underserved communities.

- Over-Reliance on Technology: The black-box nature of AI models can lead to over-reliance, potentially sidelining human expertise.

The authors advocate for transparency, robust evaluation metrics, and ethical guidelines to mitigate these risks.
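As an illustration of what robust evaluation can involve, here are three widely used point-forecast metrics: MAE, RMSE, and MASE (mean absolute scaled error, which divides the MAE by the in-sample error of a seasonal-naive forecast so scores are comparable across series of different scales). These are assumed examples of standard definitions, not necessarily the metrics the book recommends.

```python
import math


def mae(actual, forecast):
    """Mean absolute error."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def rmse(actual, forecast):
    """Root mean squared error; penalizes large misses more than MAE."""
    return math.sqrt(sum((a - f) ** 2 for a, f in zip(actual, forecast)) / len(actual))


def mase(actual, forecast, train, m=1):
    """MAE scaled by the in-sample MAE of a seasonal-naive forecast with period m."""
    naive_errors = [abs(train[t] - train[t - m]) for t in range(m, len(train))]
    scale = sum(naive_errors) / len(naive_errors)
    if scale == 0:
        raise ValueError("seasonal-naive in-sample error is zero; MASE is undefined")
    return mae(actual, forecast) / scale


train = [10.0, 12.0, 11.0, 13.0]
actual, forecast = [14.0, 15.0], [13.0, 17.0]
print(mae(actual, forecast), rmse(actual, forecast), mase(actual, forecast, train))
```

A MASE below 1 means the forecast's average error is smaller than the naive baseline's in-sample error, which gives a scale-free sanity check alongside MAE and RMSE.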

Future Directions

The book concludes with a forward-looking perspective, identifying areas ripe for innovation:

- Hybrid Models: Combining traditional statistical methods with generative AI to leverage the strengths of both approaches.

- Explainability: Developing interpretable models to build trust and facilitate human-AI collaboration.

- Edge Computing: Deploying lightweight models like DLinear on edge devices for real-time forecasting in IoT applications.

- Synthetic Data: Using generative models to create synthetic datasets for training, addressing data scarcity in niche domains.

- Ethical AI: Establishing global standards for the ethical use of AI in forecasting.
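One common way to build the hybrid models mentioned above is to let a statistical model capture the level and let a second model learn the structure left in its residuals. In this minimal sketch, simple exponential smoothing plays the statistical role and, to keep the example self-contained, a least-squares AR(1) fit on the residuals stands in for the neural or generative component; the function names and the `alpha` value are illustrative assumptions.

```python
def ses(series, alpha):
    """Simple exponential smoothing.

    Returns the one-step-ahead fitted values and the final level, which is
    the forecast for the next, unseen step.
    """
    level = series[0]
    fitted = []
    for y in series:
        fitted.append(level)                       # forecast made before seeing y
        level = alpha * y + (1 - alpha) * level    # update the level with y
    return fitted, level


def fit_ar1(residuals):
    """Least-squares AR(1) coefficient (no intercept) for a residual series."""
    num = sum(residuals[t] * residuals[t - 1] for t in range(1, len(residuals)))
    den = sum(r * r for r in residuals[:-1])
    return num / den if den else 0.0


def hybrid_forecast(series, alpha=0.5):
    """One-step forecast = statistical forecast + predicted next residual."""
    fitted, level = ses(series, alpha)
    residuals = [y - f for y, f in zip(series, fitted)]
    phi = fit_ar1(residuals)
    return level + phi * residuals[-1]


history = [20.0, 22.0, 21.0, 24.0, 23.0, 26.0, 25.0, 28.0]
print(hybrid_forecast(history))
```

Swapping `fit_ar1` for a neural residual model keeps the same structure: the statistical part stays interpretable, and the learned part only has to explain what the baseline misses.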

Mind Map

Key Takeaways:

- 💡 Insightful Idea: Generative AI transforms time series forecasting by capturing complex patterns and dependencies.

- ⚠️ Warning: Ethical concerns, such as data privacy and bias, must be addressed to ensure responsible AI deployment.

- 🔍 Key Detail: Foundation models like TimeGPT offer zero-shot forecasting, enabling predictions for unseen datasets.

- 🚀 Future Opportunity: Innovations in hybrid models and synthetic data generation could redefine the field.

- 🌍 Societal Impact: Accurate forecasting can drive efficiency and sustainability across industries, from energy to retail.