This article introduces multilingual artificial intelligence (AI), a field that combines language processing, machine learning, and cultural understanding to support communication across languages. We begin by defining key concepts such as symbolic AI (rule-based systems) and deep learning (neural networks), then turn to large language models (LLMs), such as those behind ChatGPT, and their applications in translation, information retrieval, and cultural adaptation.

We examine how AI processes linguistic data, the challenges of low-resource languages, and the role of prompt engineering and fine-tuning in improving model performance. Finally, we discuss the cultural implications of AI, including biases in training data and the need for multicultural AI that respects diverse perspectives.

1. Introduction: What is Multilingual AI?

Artificial Intelligence (AI) has revolutionized how we interact with technology, particularly in language-related tasks. Multilingual AI refers to AI systems capable of understanding, processing, and generating text in multiple languages. Unlike traditional AI models limited to a single language, multilingual AI can:

- Translate between languages (e.g., Google Translate).

- Retrieve information across languages (e.g., multilingual search engines).

- Adapt responses based on cultural context (e.g., chatbots that adjust tone based on region).

Example:

When you ask ChatGPT a question in French, there is no separate step that translates your words into English and back. The model processes the French text directly and generates a coherent response in French. This is possible because it was trained on vast amounts of multilingual data.

2. Symbolic AI vs. Deep Learning: Two Approaches to Language Processing

A. Symbolic AI (Rule-Based Systems)

- Definition: Uses predefined rules (e.g., grammar, dictionaries) to process language.

- Strengths:

  - Precise for structured tasks (e.g., parsing sentences).

  - Interpretable (humans can trace how decisions are made).

- Limitations:

  - Struggles with ambiguity (e.g., slang, idioms).

  - Requires manual rule creation (labor-intensive).

B. Deep Learning (Neural Networks)

- Definition: Learns patterns from data without explicit rules.

- Strengths:

  - Handles ambiguity well (e.g., uses the surrounding words to decide whether “bank” means a financial institution or a river edge).

  - Adapts to new languages with sufficient data.

- Limitations:

  - Requires massive datasets.

  - “Black box” nature makes it hard to debug.

Example:

- Symbolic AI: Early translation systems (e.g., 1990s rule-based translators) often failed on idiomatic phrases like “kick the bucket” (meaning “to die”), because word-level rules translate each word literally.

- Deep Learning: Neural systems such as Google’s Neural Machine Translation (GNMT), and more recently LLMs, infer meaning from context, which improves accuracy on idiomatic text.
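The idiom problem above can be made concrete with a minimal sketch of a rule-based translator. The dictionaries and function names are hypothetical and cover only this one sentence. The point is that every idiom needs its own hand-written rule, which is the labor-intensive part of symbolic systems.

```python
# Toy English-to-French word rules (hypothetical and far from complete).
WORD_RULES = {
    "he": "il",
    "kicked": "a frappé",
    "the": "le",
    "bucket": "seau",
}

# Idioms must be added by hand, one rule per phrase.
IDIOM_RULES = {
    "kicked the bucket": "est mort",
}


def translate_word_by_word(sentence: str) -> str:
    """Translate each word independently; unknown words pass through."""
    words = sentence.lower().split()
    return " ".join(WORD_RULES.get(word, word) for word in words)


def translate_with_idioms(sentence: str) -> str:
    """Match known idioms first, then fall back to word-level rules."""
    words = sentence.lower().split()
    output = []
    i = 0
    while i < len(words):
        for idiom, translation in IDIOM_RULES.items():
            idiom_words = idiom.split()
            if words[i:i + len(idiom_words)] == idiom_words:
                output.append(translation)
                i += len(idiom_words)
                break
        else:
            # No idiom starts at this position: use the word rule.
            output.append(WORD_RULES.get(words[i], words[i]))
            i += 1
    return " ".join(output)


print(translate_word_by_word("He kicked the bucket"))  # il a frappé le seau
print(translate_with_idioms("He kicked the bucket"))   # il est mort
```

The literal output is grammatical French but means that he struck a pail. The second function only works because someone anticipated this exact idiom.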

3. How Do Large Language Models (LLMs) Work?

LLMs like GPT-4 and BERT rely on:

A. Tokenization

- Splits text into smaller units (e.g., words or subwords).

- Example: “Unhappiness” → [“un”, “happiness”].
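As a rough illustration of subword splitting, the sketch below uses greedy longest-match against a tiny hand-written vocabulary. The vocabulary and the `tokenize` function are assumptions made for this example. Real tokenizers (e.g., BPE or WordPiece) learn their vocabulary from data, so their splits may differ from the ones shown here.

```python
# Hypothetical subword vocabulary; real vocabularies are learned from data.
VOCAB = {"un", "happiness", "happy", "ness", "kind"}


def tokenize(word, vocab=VOCAB, unknown="[UNK]"):
    """Split a word into the longest vocabulary pieces, left to right."""
    word = word.lower()
    tokens = []
    start = 0
    while start < len(word):
        end = len(word)
        # Shrink the candidate piece until it is found in the vocabulary.
        while end > start and word[start:end] not in vocab:
            end -= 1
        if end == start:
            # No piece matches at this position: give up on the whole word.
            return [unknown]
        tokens.append(word[start:end])
        start = end
    return tokens


print(tokenize("Unhappiness"))  # ['un', 'happiness']
print(tokenize("unkindness"))   # ['un', 'kind', 'ness']
```

Because rare words are built from shared pieces, a model can handle words it never saw whole during training.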

B. Word Embeddings

- Converts words into numerical vectors whose geometry reflects meaning, so that vector arithmetic can capture analogies (e.g., “king” - “man” + “woman” ≈ “queen”).
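The analogy above can be reproduced with a small sketch. The three-dimensional vectors are hand-made for illustration (roughly: royalty, masculinity, femininity) and are not taken from any trained model. Learned embeddings have hundreds of dimensions with no such readable labels.

```python
import math

# Hypothetical hand-made embeddings: (royalty, masculinity, femininity).
EMBEDDINGS = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.2, 0.2],
}


def cosine(u, v):
    """Cosine similarity between two vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def analogy(a, b, c):
    """Return the word closest to vec(a) - vec(b) + vec(c)."""
    target = [x - y + z for x, y, z in
              zip(EMBEDDINGS[a], EMBEDDINGS[b], EMBEDDINGS[c])]
    # Exclude the three input words, as is common practice for this test.
    candidates = [w for w in EMBEDDINGS if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(EMBEDDINGS[w], target))


print(analogy("king", "man", "woman"))  # queen
```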

C. Transformer Architecture

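- Uses self-attention to weigh how relevant every other word in the sentence is when building the representation of each word.

- Example: In “The cat sat on the mat,” the model can attend strongly to “cat” and “mat” when representing “sat,” which captures who sat and where.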
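A minimal sketch of scaled dot-product self-attention follows, computing softmax(QKᵀ / √d) V for the example sentence. To keep it short, the queries, keys, and values are all the raw token vectors, whereas a real transformer applies learned projection matrices first. The two-dimensional vectors are invented for illustration.

```python
import math

TOKENS = ["the", "cat", "sat", "on", "the", "mat"]

# Hypothetical 2-D token vectors; real models learn much larger ones.
VECTORS = [
    [0.1, 0.0],  # the
    [1.0, 0.2],  # cat
    [0.8, 0.9],  # sat
    [0.1, 0.3],  # on
    [0.1, 0.0],  # the
    [0.9, 0.4],  # mat
]


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Return (attention weights, output vectors) with Q = K = V = vectors."""
    dim = len(vectors[0])
    weights, outputs = [], []
    for query in vectors:
        # Similarity of this token to every token, scaled by sqrt(dim).
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        row = softmax(scores)
        weights.append(row)
        # Output is the weighted average of all value vectors.
        outputs.append([sum(w * value[d] for w, value in zip(row, vectors))
                        for d in range(dim)])
    return weights, outputs


weights, outputs = self_attention(VECTORS)
for token, weight in zip(TOKENS, weights[2]):  # row for "sat"
    print(f"{token:>4}: {weight:.2f}")
```

With these toy vectors, the row for “sat” gives more weight to “cat” and “mat” than to “the” and “on”, which mirrors the intuition in the example.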

D. Training Process

- Pre-training: Learns general language patterns from unlabeled text (e.g., predicting masked words, as in BERT, or the next word, as in GPT-style models).

- Fine-tuning: Adapts to specific tasks (e.g., translation, summarization).
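Pre-training needs no human labels because the text supplies its own targets. The sketch below shows how training pairs could be derived from a raw sentence for the two objectives. The function names are hypothetical, and masking every position in turn is a simplification: BERT, for instance, masks a random subset of roughly 15% of tokens.

```python
def make_masked_examples(sentence, mask_token="[MASK]"):
    """Masked-word objective: hide one word, predict it from the rest."""
    words = sentence.split()
    examples = []
    for i, target in enumerate(words):
        masked = words[:i] + [mask_token] + words[i + 1:]
        examples.append((" ".join(masked), target))
    return examples


def make_next_word_examples(sentence):
    """Next-word objective: predict each word from the words before it."""
    words = sentence.split()
    return [(" ".join(words[:i]), words[i]) for i in range(1, len(words))]


for model_input, target in make_masked_examples("the cat sat"):
    print(f"{model_input!r} -> {target!r}")

for model_input, target in make_next_word_examples("the cat sat"):
    print(f"{model_input!r} -> {target!r}")
```

Fine-tuning then continues training the same model on a much smaller set of task-specific pairs, such as source sentences and their translations.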

4. Challenges in Multilingual AI

A. Low-Resource Languages

- Languages like Swahili or Nepali have far less digitized text available for training than English.

- One mitigation: Cross-lingual transfer learning (reusing knowledge learned from high-resource languages like English).
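One way to picture cross-lingual transfer is a shared embedding space in which translations sit close together. In the sketch below, a sentiment classifier is fit on English words only and then applied to Swahili words it never saw labeled. The vectors are hand-made assumptions. In a real system the shared space would come from a multilingual pre-trained model, and the classifier would be far richer than a nearest-centroid rule.

```python
# Hypothetical shared multilingual space: translations are placed nearby.
SHARED_SPACE = {
    "good":     [0.90, 0.10],
    "great":    [0.80, 0.20],
    "bad":      [0.10, 0.90],
    "terrible": [0.20, 0.80],
    "nzuri":    [0.85, 0.15],  # Swahili for "good"
    "mbaya":    [0.15, 0.85],  # Swahili for "bad"
}

# Labeled data exists only for the high-resource language (English).
ENGLISH_TRAINING_DATA = [
    ("good", "positive"),
    ("great", "positive"),
    ("bad", "negative"),
    ("terrible", "negative"),
]


def train_centroids(examples):
    """Average the vectors of each label to get one centroid per label."""
    grouped = {}
    for word, label in examples:
        grouped.setdefault(label, []).append(SHARED_SPACE[word])
    return {
        label: [sum(axis) / len(vectors) for axis in zip(*vectors)]
        for label, vectors in grouped.items()
    }


def predict(word, centroids):
    """Assign the label whose centroid is closest to the word's vector."""
    vector = SHARED_SPACE[word]

    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(vector, centroid))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))


CENTROIDS = train_centroids(ENGLISH_TRAINING_DATA)
print(predict("nzuri", CENTROIDS))  # positive
print(predict("mbaya", CENTROIDS))  # negative
```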

B. Cultural Biases

- Models trained on Western data may misinterpret non-Western contexts.

- Example: An AI might associate “wedding” with white dresses, ignoring cultural variations (e.g., red in Chinese weddings).

C. Ambiguity in Translation

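- A word like English “you” does not mark formality, but many target languages force a choice (e.g., French informal “tu” vs. formal “vous”), so a translator needs context that the source word alone does not provide.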
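The sketch below shows why the source word alone is not enough: the function cannot choose a French pronoun without extra arguments describing the situation. The `Formality` type and `translate_you` function are hypothetical. A real system would have to infer these signals from the surrounding text or from user settings.

```python
from enum import Enum


class Formality(Enum):
    INFORMAL = "informal"
    FORMAL = "formal"


FRENCH_YOU = {
    Formality.INFORMAL: "tu",
    Formality.FORMAL: "vous",
}


def translate_you(formality: Formality, plural: bool = False) -> str:
    """Pick the French pronoun for English 'you' from contextual signals."""
    if plural:
        # French uses "vous" for any plural addressee, formal or not.
        return "vous"
    return FRENCH_YOU[formality]


print(translate_you(Formality.INFORMAL))               # tu
print(translate_you(Formality.FORMAL))                 # vous
print(translate_you(Formality.INFORMAL, plural=True))  # vous
```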

5. Applications of Multilingual AI

A. Machine Translation

- Example: DeepL uses deep learning and typically produces more natural-sounding translations than older rule-based systems.

B. Information Retrieval

- Example: A researcher queries in Spanish, and the system retrieves relevant English papers.
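A very simple form of cross-language retrieval translates the query terms and then matches them against documents in the target language. The sketch below does this with a toy Spanish-to-English dictionary and term overlap. The dictionary, documents, and function names are invented for illustration. Production systems more commonly rely on machine translation or multilingual embeddings.

```python
# Hypothetical bilingual dictionary (Spanish -> English).
ES_TO_EN = {
    "redes": "networks",
    "neuronales": "neural",
    "traducción": "translation",
}

# Hypothetical English document titles.
DOCUMENTS = [
    "Neural networks for image classification",
    "A survey of machine translation",
    "Cooking with seasonal vegetables",
]


def translate_query(query):
    """Map each known Spanish term to its English equivalent."""
    return {ES_TO_EN[word] for word in query.lower().split()
            if word in ES_TO_EN}


def search(query, documents=DOCUMENTS):
    """Rank documents by how many translated query terms they contain."""
    terms = translate_query(query)
    scored = []
    for doc in documents:
        score = len(terms & set(doc.lower().split()))
        if score > 0:
            scored.append((score, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored]


print(search("redes neuronales"))
print(search("traducción"))
```

The first query returns only the neural networks title and the second only the machine translation survey. Words missing from the dictionary are silently dropped, which is the main weakness of this approach.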

C. Chatbots & Virtual Assistants

- Example: A virtual assistant such as Amazon Alexa can respond in a more polite register in Japanese and a more casual one in English.

6. The Future: Toward Multicultural AI

Future AI must:

- Reduce biases (e.g., avoid stereotyping genders in job descriptions).

- Incorporate cultural context (e.g., recognizing religious holidays in responses).

- Support endangered languages (e.g., AI tools for indigenous languages).

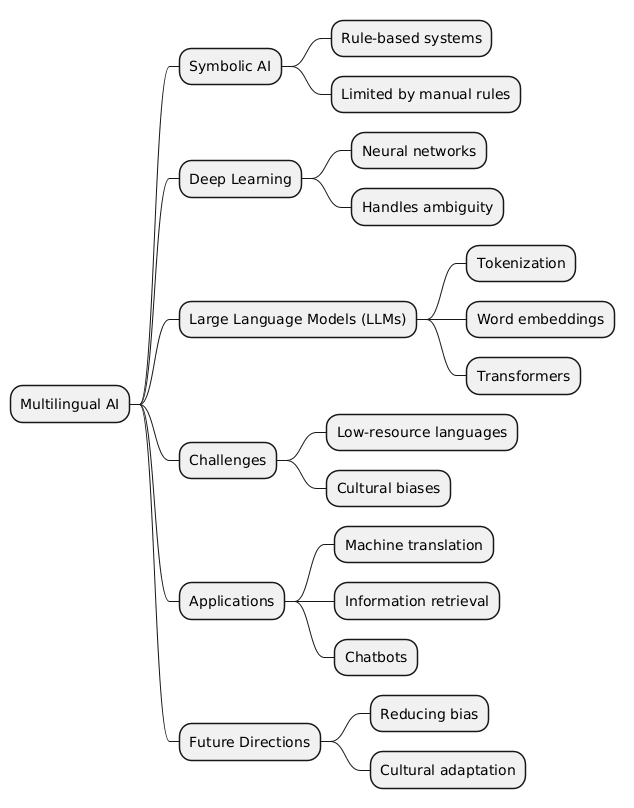

Mind Map

Conclusion

Multilingual AI is transforming global communication, but challenges remain in fairness and cultural sensitivity. By combining deep learning advancements with human oversight, we can build AI that truly bridges languages and cultures.